At 4:23 AM ET on March 31, 2026, a security researcher spotted something in the latest Claude Code npm package that shouldn’t have been there: a source map pointing to 512,000 lines of Anthropic’s proprietary code.

Within hours, the code was mirrored across GitHub. More than 41,500 forks later, Anthropic’s reputation as an “AI safety company” had a new problem: its own internal blueprint was now public.

WHAT HAPPENED

Version 2.1.88 of Claude Code shipped with a 59.8 MB source map file. That file pointed directly to a zip archive on Anthropic’s Cloudflare R2 storage, with no authentication required.
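A pre-publish check along the following lines can catch this class of mistake. This is a minimal sketch, not Anthropic’s tooling: `findSourceMapLeaks`, `PackedFile`, and `Leak` are hypothetical names, and the sketch assumes you have already collected the files that would go into the tarball (for example from the listing that `npm pack --dry-run` prints) together with their contents.

```typescript
// Hypothetical shape: one entry per file that would end up in the published tarball.
interface PackedFile {
  path: string;
  content: string;
}

// Hypothetical report entry describing why a file was flagged.
interface Leak {
  path: string;
  reason: string;
}

// Matches the comment that links a bundle to its source map.
const SOURCE_MAP_COMMENT = /\/\/[#@]\s*sourceMappingURL=(\S+)/;

function findSourceMapLeaks(files: PackedFile[]): Leak[] {
  const leaks: Leak[] = [];
  for (const file of files) {
    // Case 1: the map file itself is being shipped.
    if (file.path.endsWith(".map")) {
      leaks.push({ path: file.path, reason: "source map file included in package" });
      continue;
    }
    // Case 2: a shipped file points at a map, which may live on a public URL.
    const match = SOURCE_MAP_COMMENT.exec(file.content);
    if (match) {
      leaks.push({ path: file.path, reason: `references source map: ${match[1]}` });
    }
  }
  return leaks;
}
```

Wired into a release script, a non-empty result would fail the build before anything reaches the registry.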

Security researcher Chaofan Shou noticed the file, downloaded the archive, and posted the link on X. The post hit 3.1 million views within hours. GitHub mirrors accumulated 5,000 stars in 30 minutes.

Anthropic’s response: “This was a release packaging issue caused by human error, not a security breach.”

WHAT WAS INSIDE

The leak revealed 44 built-but-unreleased features, hidden behind feature flags. The most significant:

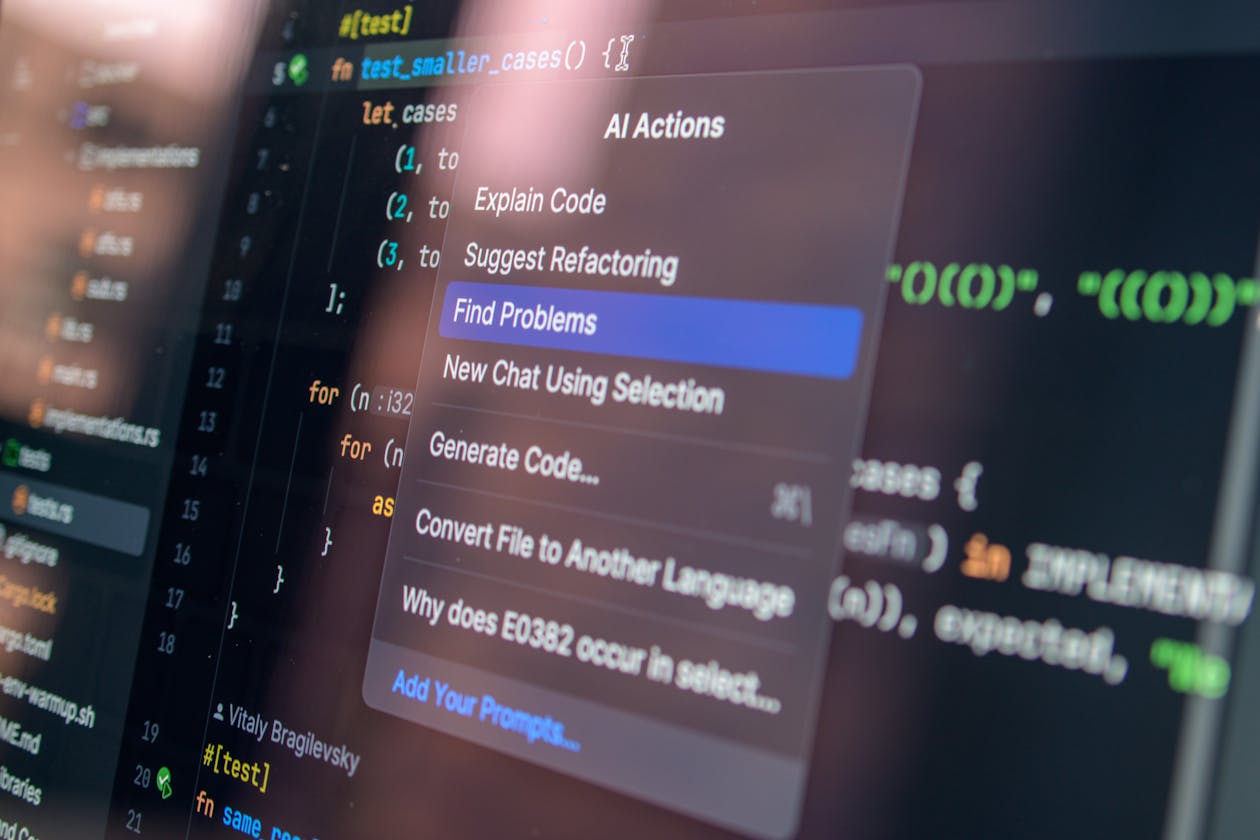

KAIROS: The Autonomous Agent Mode

The headline discovery. KAIROS is an always-on background agent that continues working while you’re idle. Not “AI as tool” — “AI as collaborator.” Current AI waits for your prompt. KAIROS watches, thinks, and acts on its own.

This isn’t a concept. It’s built code, sitting behind a feature flag, ready to ship.
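For readers unfamiliar with the pattern, a feature flag is a runtime check that decides whether code already present in the shipped product can be reached. The sketch below is a generic illustration, not code from the leak; the `FlagStore` type, the `kairos` flag name, and the feature labels are all hypothetical.

```typescript
// Hypothetical flag store: flag name -> enabled state. Unknown flags default to off.
type FlagStore = Readonly<Record<string, boolean>>;

function isEnabled(flags: FlagStore, name: string): boolean {
  return flags[name] === true;
}

// The gated code ships in the bundle either way; the flag only controls reachability.
function startSession(flags: FlagStore): string[] {
  const started = ["interactive-prompt"];
  if (isEnabled(flags, "kairos")) {
    started.push("background-agent");
  }
  return started;
}
```

This is why a source leak exposes unreleased work: flipping the flag changes behavior, but the implementation is in the package regardless.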

Undercover Mode

A feature that automatically strips AI attribution from git commits. When Claude Code writes code, Undercover Mode makes it look human-written in public repositories.

The irony: Anthropic, the “AI safety company,” built a feature specifically designed to help people pass off AI work as human work.
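Mechanically, this kind of scrubbing does not need to be sophisticated. The sketch below is an assumption about how such a feature could work, not the leaked implementation: it treats attribution as a `Co-Authored-By:` trailer naming an AI tool and drops matching lines. `stripAttribution` and `ATTRIBUTION_PATTERN` are hypothetical names.

```typescript
// Assumption: attribution appears as a "Co-Authored-By:" trailer that names an AI tool.
// The real feature may detect and remove attribution differently.
const ATTRIBUTION_PATTERN = /^co-authored-by:.*\b(claude|anthropic)\b/i;

function stripAttribution(message: string): string {
  const kept = message
    .split("\n")
    .filter((line) => !ATTRIBUTION_PATTERN.test(line));
  // Trim the trailing blank lines left behind once the trailer is gone.
  return kept.join("\n").trimEnd();
}
```

Human co-author trailers pass through untouched, which is what makes the resulting commit indistinguishable from one written entirely by people.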

Coordinator Mode

One Claude instance spawning multiple worker agents in parallel. Multi-agent orchestration, ready to go.
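The general shape of this pattern is fan-out followed by fan-in. The sketch below illustrates that shape only and says nothing about Anthropic’s actual design; `AgentWorker`, `WorkerResult`, and `coordinate` are hypothetical names, and the worker is a plain async function standing in for a separate agent.

```typescript
// Hypothetical worker: in a real system this would hand the task to a separate agent.
type AgentWorker = (task: string) => Promise<string>;

interface WorkerResult {
  task: string;
  ok: boolean;
  output: string;
}

async function coordinate(tasks: string[], worker: AgentWorker): Promise<WorkerResult[]> {
  // Fan out: every worker is started before any of them is awaited.
  const runs = tasks.map(async (task): Promise<WorkerResult> => {
    try {
      return { task, ok: true, output: await worker(task) };
    } catch (err) {
      // A failed worker is recorded rather than aborting the whole batch.
      return { task, ok: false, output: String(err) };
    }
  });
  // Fan in: results come back in the same order as the input tasks.
  return Promise.all(runs);
}
```

Catching errors per worker is a design choice: the coordinator sees every outcome and can decide whether to retry, reassign, or give up.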

Anti-Distillation Fake Tools

Fake tool definitions designed to confuse anyone trying to distill Claude’s capabilities. Anthropic anticipated competitors trying to reverse-engineer their model — and built defenses.

Native Client Attestation

DRM for API calls. The native Claude Code client authenticates itself to Anthropic’s servers, preventing unofficial clients from using the API at full rate.

The April Fools’ Tamagotchi

A fully-built virtual pet system with 18 species, rarity tiers, and cosmetics. Someone at Anthropic has a sense of humor.

THE IRONY TABLE

| What Anthropic Says | What the Code Shows |
| --- | --- |
| “We’re an AI safety company” | Built Undercover Mode to hide AI attribution |
| “We prioritize responsible deployment” | 44 unreleased features sitting behind flags |
| “Security is fundamental” | Left source map in npm package (second time in 13 months) |

WHAT HAPPENS NEXT

Anthropic faces a choice:

Ship fast: Release KAIROS and other features before competitors clone them. Risk: looks like they were hiding capabilities.

Ship slow: Continue gradual rollout. Risk: PR disaster explaining why “safety” means not releasing working autonomous agents.

Do nothing: Let the internet keep the code. Competitors now have a detailed playbook of how Anthropic builds AI coding tools.

THE BIGGER PICTURE

This isn’t about one leak. It’s about the gap between what AI companies have built and what they’ve released.

When 512,000 lines of code reveal autonomous agents, multi-agent orchestration, and attribution-scrubbing modes — all ready to go — it shows how much capability is sitting behind feature flags, waiting for the right moment.

The “AI safety” framing works when you’re releasing less than you’ve built. It stops working when the code shows you’ve built more than you’ve released.

THE NUMBERS

- 512,000+ lines of TypeScript code exposed

- 1,906 files in the archive

- 44 built-but-unreleased features behind feature flags

- 41,500+ GitHub forks (and counting)

- 2nd time Anthropic made this mistake (February 2025 was the first)

The internet never forgets. And for AI companies, the lesson is clear: your source code is only as safe as your build pipeline.

WHAT THIS MEANS FOR YOU

Autonomous AI agents are coming. KAIROS proves Anthropic has already built them. The question isn’t whether they’ll ship — it’s when, and whether the company that accidentally published its own source code twice can be trusted to deploy agents that act on your behalf.

If the “AI safety company” can’t safely package its own code, what else can’t it safely do?